Introduction

The development of oncology chatbots shows the need for artificial intelligence (AI) systems that focus on people’s needs. These systems should provide empathy, accurate information, and personalized support to patients. Cancer patients and their families often face emotional stress and need reliable information, so chatbots can help answer their questions and provide guidance.

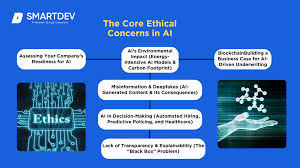

However, as advanced AI models such as GPT-3 and GPT-4 become more common in health care, ethical issues become more important. Oncology chatbots must follow ethical principles such as fairness, transparency, accountability, and protection of patient privacy. They should also avoid bias and serve all users fairly, including people from underrepresented communities.

Human-centered AI in oncology focuses on building systems that support both patients and health-care providers. Ethical AI should protect the dignity, rights, and well-being of patients. This includes building trust, protecting patient data, and providing correct and unbiased information.

This review studies how generative AI and large language models are used in oncology chatbots. It highlights the importance of customizing chatbots to meet the needs of different users so they can provide more supportive and patient-focused care.

The main goal of this study is to examine the ethical challenges of using AI in sensitive healthcare areas like cancer treatment. The study also looks at bias in AI training data, which can lead to unfair or incorrect results.

The paper identifies key ethical problems in oncology chatbots and suggests solutions. These include using more diverse training data, monitoring AI systems regularly, and improving training methods. These steps can help make AI tools in oncology more effective and ethical.

Overall, this study provides a focused discussion on the ethical use of AI in oncology chatbots and offers practical recommendations to improve their development and use in health care.

State of the art in AI ethics

As AI systems become more widely used, it is essential that they are transparent, safe, and trustworthy. Because AI raises many ethical questions, there is a growing need to regulate how these systems are built and deployed. Regulation helps people and society benefit from AI while ensuring that developers take responsibility for their work. The rules governing the creation and use of AI are grounded mostly in ethical principles and respect for human values.

Addressing the Challenge of AI Hallucinations in High-Stakes Domains

Artificial intelligence (AI) hallucinations, where models produce plausible but incorrect or fabricated outputs, pose significant challenges for high-stakes applications such as healthcare, scientific writing, and legal decision-making. These errors arise from limitations in large language model (LLM) training data, which may contain inaccuracies, biases, or irrelevant information. Hallucinations can occur in closed-domain settings, where errors are easier to detect, or in open-domain contexts, which are more complex and harder to verify. Mitigating these risks requires robust training on accurate data, fact-checking mechanisms, transparency, and continuous monitoring of AI outputs. Collaborative efforts among developers, users, and regulators, coupled with education on AI limitations, are essential to ensure trustworthy, reliable, and ethically aligned AI systems across critical domains.

Is there a need for new ethical theories or guidelines that account for AI’s non-human emotional capabilities, beyond the scope of traditional human-centered ethics?

The rise of AI ethics forces us to confront philosophical tensions, particularly the boundary between human and non-human. As AI systems increasingly replicate human abilities such as reasoning, language, and creativity, traditional markers of humanness are challenged, revealing the need for a broader, pluralistic conception of human worth that recognizes diverse abilities and identities. Autonomy is another key concern, as highly autonomous AI in domains like healthcare and defense raises ethical questions about the limits of machine decision-making and the necessity of human-centered safeguards. However, human-centeredness is not neutral: it can reflect societal biases, privileging certain groups or species while reproducing discrimination against marginalized people or non-human animals. Anthropocentric assumptions often underpin AI design, risking unethical outcomes if left unexamined. This paper proposes a nuanced approach, viewing human-centeredness as a spectrum rather than a binary, allowing for the integration of human values while mitigating the harms of anthropocentrism. By distinguishing human-centeredness from anthropocentrism, the paper emphasizes ethical AI that considers the moral standing of diverse human groups, marginalized populations, non-human animals, and the broader environment, ultimately promoting fairness, inclusivity, and responsible AI deployment.

Conclusion

The content of ethical standards is often interpreted as exclusively a matter of fairness, which is primarily taken to be a relational concern with how some people are treated compared with others. Illustrations of AI-based technology that raise fairness concerns include facial recognition technology that systematically disadvantages darker-skinned people or automated resume screening tools that are biased against women because the respective algorithms were trained on data sets that are demographically unrepresentative or that reflect historically sexist hiring practices. “Algorithmic unfairness” is a vitally important matter, especially when it exacerbates the condition of members of already unjustly disadvantaged groups. But this should not obscure the fact that ethics also encompasses nonrelational concerns such as whether, for example, facial recognition technology should be deployed at all in light of privacy rights or whether it is disrespectful to job applicants in general to rank their resumes by means of an automated process.

Sources

1. https://link.springer.com/article/10.1007/s11245-025-10324-y

2. https://www.amacad.org/publication/daedalus/artificial-intelligence-humanistic-ethics

3. https://www.unesco.org/en/artificial-intelligence/recommendation-ethics